Although deepfake technologies are still in their infancy, falling for scams utilizing them can cost you millions. The good news is that there are steps you can take to make yourself and your company more challenging targets for deepfake attackers.

Generative AI technologies are getting more realistic as days go by. Many times, they can be used for good. But, as we learned in our recent article on generative AI and deepfake statistics, cybercriminals also use them to carry out nefarious activities. Unfortunately for one multinational financial firm, its employees learned (the hard way) what happens when cybercriminals use deepfake media to defraud it.

According to CNN, a finance worker at an unnamed multinational organization was duped into forking out the equivalent of nearly $26 million (USD) as part of an elaborate spearphishing campaign. Attackers used deepfake technology to impersonate several members of the organization, including the company’s chief financial officer (CFO), in a video conference with the worker.

Hong Kong Free Press reports that the deepfake scammers used publicly available audio and video recordings to create digital fakes of the financial firm’s U.K.-based CFO and other staff. The article states that “The deepfake videos were prerecorded and didn’t involve dialogue or interaction with the victim.” (Whoops… That was a big red flag.)

However, believing the request to be legitimate, the employee transferred HK$200 million to several bank accounts via 15 transactions. The employee didn’t know anything was amiss until they checked in with the company’s main office. By the time they realized what had happened, it was too late, and the attackers (along with the money) were gone.

So, what can your business do to prevent deepfake technologies from being used to breach or defraud your organization?

Let’s hash it out.

What You Can Do to Protect Your Business Against Deepfake Scammers

Deepfakes are synthetic media (images, audio, or video recordings) created using artificial intelligence (AI) and machine learning (ML) to look and sound like a specific person. This enables someone to make the subject of the deepfake appear to do or say something they didn’t.

When done with someone’s consent (as Cadbury did with Indian actor and brand ambassador Shahrukh Khan), it’s a useful tool for businesses. But when it’s used to create harmful media (e.g., non-consensual pornographic images or videos of celebrities that defame their characters, or phishing attacks that steal money or gain fraudulent access to sensitive data), it’s something that every organization needs to understand how to combat.

In a related article on the dangers of generative AI, we covered new and ongoing global initiatives that aim to protect organizations and consumers against the malicious use of generative AI technologies. This includes the creation of the Coalition for Content Provenance and Authenticity (C2PA), whose technical standard aims to help consumers distinguish authentic media from deepfakes using public key cryptography.

But many of these efforts are still in the early stages. When protecting your business or organization against deepfake scams, you need ways to fight back now. The good news is that there are steps you can take to help secure your organization from threat actors employing these tools, and the majority start with hardening your “human firewall.”

1. Educate Your Employees to Recognize the Signs of a Deepfake

A big first step to protect your organization against deepfake-wielding cybercriminals is to educate your employees about these threats. Provide your employees with the means to learn how to recognize deepfake media and practice doing so. Show them examples and teach them what to look out for.

For example, train your employees to do the following:

- Ask questions. If you find yourself in a suspicious phone call or video conversation, ask questions to gauge the other person’s response. If an attacker is using a pre-recorded message, it’ll be a big red flag when you ask a question, and the other party either doesn’t respond or continues speaking like you haven’t said anything.

- Take a breath and pause to think critically about the situation or request. Carefully evaluate what’s being said from a logical standpoint. Does the request make sense? Think about the motivation of the message. Is the message geared to elicit an emotional reaction or create a sense of urgency? Is the person asking you to do something unusual or out of the ordinary? Would it make sense to come to you with such a request? Did the person ask you not to say anything about their request to anyone else?

- Evaluate the speaker. If you know the other party, ask yourself whether they sound like the authentic person. And we don’t just mean the sound of their voice; rather, the way they say things as well (i.e., their phrasing and choice of words, the tone or inflection of their speech, and speech pattern).

- Look for inconsistencies in the audio or video. Look and listen carefully, as you might observe abnormalities in the audio or video that can give away that a person isn’t who they claim to be. Do they look different? Are their facial movements natural or do they seem “off” somehow? Do their voices and mouth movements match up? Do they appear to have extra fingers, toes, or other anomalies? Does it look like a filter is being used? Are there mismatches as far as lighting or skin tone goes in certain parts of the image or video?

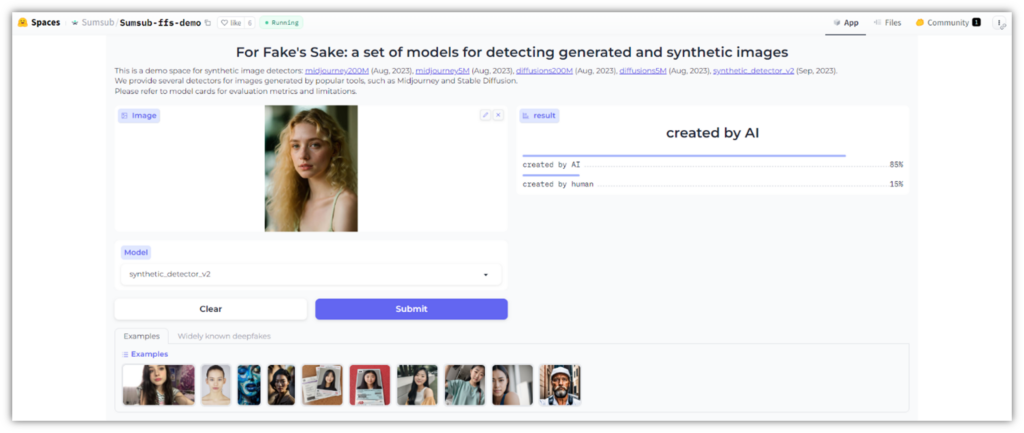

Free tools like the Spot the Deepfake quiz on SpotDeepfakes.org to see whether your employees know how to apply what they’ve learned to identify fake media. You also can use an open tool like Sumsub’s For Fake’s Sake AI detection demo tool as another method of checking for generative AI image content. Here’s what it looks like when testing an image using For Fake’s Sake:

Of course, teaching them what to look out for isn’t your only defense against deepfake media. As AI technologies advance, those fake media will become harder to distinguish…

2. Teach Employees to Trust Their Guts (If Something Seems Fishy, It Probably Is)

As a human being, your intuition or “gut instinct” is nature’s way of telling you that you might be in a bad or dangerous situation. You get that gnawing, gut feeling that you can’t seem to shake. If you order a shared ride service but get a weird or bad feeling about the person who comes to pick you up, you wouldn’t just get into the car, right? The same concept applies to obliging the request of a scammer.

Voice cloning technologies have made incredible advancements in recent years. If anything seems “off” or suspicious about a phone call, teach your employees to stop communications immediately and call the legitimate person directly using an official company phone number. (Don’t call back on a provided phone number.)

(Of course, the same concept applies to emails. If you get an unexpected urgent email or text message asking you to do something, never take it at face value and always reach out to the person in question via other official channels.)

Cybercriminals love to use social engineering to trick and manipulate people into doing things they shouldn’t. They create a sense of urgency to spur their targets to action. These scammers often aim to get their hands on sensitive personal data or login credentials. Or, like the situation with the multinational firm in Hong Kong we mentioned earlier, bad guys have your money on their minds.

This is why having employees who exercise healthy skepticism can go a long way in helping prevent your company from landing in hot water. Teach your employees to keep their emotions in check and not take a social engineer’s bait.

3. Implement Internal Policies, Processes, and Security Technologies to Fight Deepfakes

Deepfake technologies don’t just take advantage of vulnerabilities in your “human firewall.” They can also be used to exploit vulnerabilities in your security technologies and processes. This is why it’s crucial to implement documented policies, processes, and procedures to enhance your cyber security.

Consider implementing policies that require all important requests (e.g., large wire transfers) to be verified through a known good communication channel, using a process that’s not initiated by the requester. This could also include the use of a secret passphrase or pass code (more on that a little later).
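To make the policy above concrete, here is a minimal sketch of how such a rule could be expressed in code. Everything in it is an assumption made for illustration: the threshold, the channel names, and the `TransferRequest` fields are hypothetical and would need to match your own procedures.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical values; pick ones that match your own risk policy.
VERIFICATION_THRESHOLD = 10_000
KNOWN_GOOD_CHANNELS = {"directory_phone", "in_person"}


@dataclass(frozen=True)
class TransferRequest:
    requester: str
    amount: float
    request_channel: str                 # where the request arrived, e.g. "video_call"
    verified_via: Optional[str] = None   # channel used for the callback, if any
    callback_started_by_requester: bool = False


def may_execute(request: TransferRequest) -> bool:
    """Return True only if the request satisfies the verification policy."""
    if request.amount < VERIFICATION_THRESHOLD:
        return True  # small transfers follow the normal process
    if request.verified_via not in KNOWN_GOOD_CHANNELS:
        return False  # no callback, or callback on an untrusted channel
    if request.verified_via == request.request_channel:
        return False  # verification must be out-of-band
    # The employee, not the requester, must have initiated the callback.
    return not request.callback_started_by_requester


unverified = TransferRequest("cfo", 25_000_000, "video_call")
verified = TransferRequest("cfo", 25_000_000, "video_call", verified_via="directory_phone")
print(may_execute(unverified), may_execute(verified))
```

The point of the sketch is that a convincing video call on its own never satisfies the rule; only a callback that the employee starts on a separate, trusted channel does.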

From an IT perspective, one way to do this is to implement user verification policies for account security. For example, you can require all IT service members to adhere to a user verification policy before resetting passwords. Also, be sure to use multi-factor authentication mechanisms (think of tools like Okta and Google Authenticator) as part of this to help thwart low-level attackers.

It’s important to note that not all identity verification technologies are impervious to deepfakes. For example, researchers at Penn State University discovered that some facial recognition applications’ user detection technologies (particularly the facial liveness verification feature) were vulnerable to deepfakes. This means that even the authentication technologies we rely on to secure our mobile devices, for example, may be exploited by deepfake scammers if given a chance.

Knowing this, what can you do to mitigate deepfake-related risks when it comes to securely authenticating your employees?

Use PKI to Your Advantage to Authenticate Users

Use digital certificate-based authentication to verify your employees’ digital identities using public key cryptography. You can do this by using a personal authentication certificate in lieu of facial recognition systems (ideally in combination with other zero-trust procedures) to help prevent deepfake technologies from being used to access your systems.
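As a rough illustration of certificate-based authentication, the sketch below configures a TLS server context in Python that refuses connections from clients without a valid certificate (often called mutual TLS). The function name and the file path parameters are hypothetical; in practice they would point to certificates issued by your organization’s own certificate authority.

```python
import ssl
from typing import Optional


def build_client_cert_context(
    server_cert: Optional[str] = None,
    server_key: Optional[str] = None,
    trusted_ca: Optional[str] = None,
) -> ssl.SSLContext:
    """Build a server-side TLS context that requires a client certificate."""
    # Purpose.CLIENT_AUTH yields a context meant for the server side.
    context = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    # Reject any client that does not present a certificate we can verify.
    context.verify_mode = ssl.CERT_REQUIRED
    if trusted_ca:
        # Only certificates chaining to this CA are accepted.
        context.load_verify_locations(cafile=trusted_ca)
    if server_cert:
        context.load_cert_chain(certfile=server_cert, keyfile=server_key)
    return context


context = build_client_cert_context()
print(context.verify_mode)
```

With a setup along these lines, a cloned face or voice is not enough to get in; the attacker would also need the employee’s private key.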

Add Digital Signatures to Your Company’s Digital Media

Prove the provenance of your organization’s digital media files (i.e., origin info and modifications) using a cryptographic digital signature that meets C2PA or Content Authenticity Initiative (CAI) standards (they’re closely related standards). The C2PA standard enables you to establish digital provenance for your media by attaching cryptographic digital signatures to your files (images, video, and audio). This cryptographic signature provides assurance that your media is authentic and hasn’t been altered since it was signed.

Does this stop deepfake scammers from repurposing your content for nefarious purposes? No. But what it does is help establish digital trust with customers and other users. It also gives you the means to add a Training and Data Mining assertion (i.e., allowed, notAllowed, or constrained) to your digital assets that:

- states whether your content may be used for AI/ML training or data mining-related purposes,

- specifies under what conditions it is allowed, or

- instructs whether permission is required for such usage.
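For a sense of what such an assertion might look like, here is a small sketch that builds and validates one as a plain Python dictionary. The three values (allowed, notAllowed, constrained) come from the description above; the `c2pa.training-mining` label, the entry names, and the `constraint_info` field reflect our reading of the C2PA specification and should be treated as assumptions to check against the version you target. The helper function itself is hypothetical and is not part of any C2PA tool.

```python
import json
from typing import Dict

ALLOWED_USES = {"allowed", "notAllowed", "constrained"}


def build_training_mining_assertion(entries: Dict[str, Dict[str, str]]) -> dict:
    """Validate the entries and wrap them in an assertion-shaped dictionary."""
    for name, entry in entries.items():
        use = entry.get("use")
        if use not in ALLOWED_USES:
            raise ValueError(f"{name}: unsupported use value {use!r}")
        # A constrained entry should explain the conditions that apply.
        if use == "constrained" and not entry.get("constraint_info"):
            raise ValueError(f"{name}: 'constrained' needs constraint_info")
    # Label assumed from the C2PA specification; verify before relying on it.
    return {"label": "c2pa.training-mining", "data": {"entries": entries}}


assertion = build_training_mining_assertion({
    "c2pa.ai_training": {"use": "notAllowed"},
    "c2pa.data_mining": {
        "use": "constrained",
        "constraint_info": "Contact the rights holder for a license.",
    },
})
print(json.dumps(assertion, indent=2))
```

In a real workflow, a C2PA-capable signing tool would embed an assertion like this in the file’s manifest and sign it along with the rest of the provenance data.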

In November, an alphabet soup of agencies (the National Security Agency (NSA), Federal Bureau of Investigation (FBI), and Cybersecurity and Infrastructure Security Agency (CISA)) published a joint report on the growing threats of deepfake media. This resource mentions the use of multimodal models that can “lift a 2D image to 3D to enable realistic generation of video based on a single image.”

Likewise, cybercriminals can now generate fake audio clips that emulate a person’s vocal characteristics using just a few seconds’ worth (as little as 3 seconds, according to Microsoft) of a recorded voice sample. They can then use this to “clone it and use a text-to-speech API to generate authentic fake voices.”

Now, imagine what they can do if they have many data clips at their disposal (like, say, recordings of a politician or your company’s CEO speaking at events)…

Take steps now to secure your media against those who wish to misuse it.

4. Implement a Secret Transaction Code or Phrase That Changes Daily

When I was a kid, my mom taught me a secret phrase that only we knew in case of an emergency. If someone other than one of my parents came to pick me up from school and didn’t know the secret phrase, then I knew not to get into the car with that person.

Likewise, businesses can implement a similar technique when it comes to making financial transactions. You could create a new code phrase each day for employees to know when requesting or carrying out financial transactions. This secret phrase could apply to all financial transactions, or you could make it a rule that it would apply only to transactions over a certain dollar amount.

If the requester doesn’t know the code, then you know something’s wrong. (Of course, this implies that you need to be sure scammers don’t have any way to find out the code. Scammers may use hacking or social engineering to get an employee to disclose the code.)

One way to create this code system could be by using something akin to the Electronic Frontier Foundation’s dice-generated passphrase creation method. The gist of it is that you generate the daily code by rolling a set number of dice and then using the rolled numbers to find individual words on a long list of words. This approach can serve as a good starting point to create your own version of a similar system. It doesn’t necessarily matter what method you use, just so long as you achieve two things:

- create a unique passphrase that’s virtually impossible to guess, and

- communicate it using secure channels to keep bad guys from getting their hands on it.

You could post this daily code on your organization’s secure intranet and require users to authenticate to it using PKI-based digital identities and other digital identity verification methods (e.g., an authenticator app). Just be sure to properly manage those certificates and their cryptographic keys to avoid them falling into the wrong hands.
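If you would rather automate the dice rolls, the following sketch shows one way to do it in Python. It assumes a word list file in which each line holds a five-digit dice key and a word (the layout used by the EFF’s large word list); the function names and the six-word default are our own choices for illustration.

```python
import hmac
import secrets
from typing import Dict

DICE_PER_WORD = 5  # five dice select one of 6**5 = 7,776 words


def load_wordlist(path: str) -> Dict[str, str]:
    """Read lines such as '11111<TAB>abacus' into a key-to-word mapping."""
    wordlist = {}
    with open(path, encoding="utf-8") as handle:
        for line in handle:
            parts = line.split()
            if len(parts) == 2:
                wordlist[parts[0]] = parts[1]
    return wordlist


def roll_key(dice: int = DICE_PER_WORD) -> str:
    """Simulate dice rolls with a cryptographically secure random source."""
    return "".join(str(secrets.randbelow(6) + 1) for _ in range(dice))


def generate_passphrase(wordlist: Dict[str, str], words: int = 6) -> str:
    """Pick one word per set of dice rolls and join them with spaces."""
    return " ".join(wordlist[roll_key()] for _ in range(words))


def matches_code(expected: str, supplied: str) -> bool:
    """Compare the supplied phrase to the daily code in constant time."""
    return hmac.compare_digest(expected.encode(), supplied.strip().encode())
```

Six words drawn from a 7,776-word list give roughly 77 bits of entropy, which is far beyond what an attacker could guess during a phone call. Using `secrets` rather than `random` matters here, because the latter is not designed for security-sensitive values.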

Why use a daily secret code? The thought process here is that changing it weekly might not be enough, as a bad guy could use a phishing attack to gain access to your system and discover your secret phrase. While changing the code daily may seem like an inconvenience, it’s a lot less painful or inconvenient than dealing with the aftermath of a data breach or theft of millions of dollars.

5. Consider Investing in Advanced Threat Detection Solutions

This option may not be viable for smaller organizations due to cost, but threat detection tools may be worthwhile investments for enterprises and other large organizations. They’re not perfect, but they add another layer to your organization’s cybersecurity defenses. More advanced threat detection tools use AI and ML technologies to detect and expose falsified images, audio, and video media.

However, as explained in an article by Carnegie Mellon University, these tools still have a long way to go in terms of achieving reliable accuracy:

“A recent comparative analysis of the performance of seven state-of-the-art detectors on five public datasets that are often used in the field showed a wide range of accuracies, from 30 percent to 97 percent, with no single detector being significantly better than another. The detectors typically had wide-ranging accuracies across the five test datasets. Typically, the detectors will be tuned to look for a certain type of manipulation, and often when these detectors are turned to novel data, they do not perform well. So, while it is true that there are many efforts underway in this area, it is not the case that there are certain detectors that are vastly better than others.”

So long as you keep in mind that these tools aren’t foolproof, you can at least use them as another layer of protection for your business, in addition to educating and training your employees to recognize the signs of deepfakes.

For obvious reasons, we aren’t recommending any particular products; there are plenty of options to choose from based on your organization’s specific needs and budget. Some commonly known tools include:

- Microsoft Video AI Authenticator

- Intel FakeCatcher

- Sensity AI

Final Thoughts on Preventing Deepfake Scams

Needless to say, the ongoing advancements in deepfake technologies are both wondrous and terrifying; which one depends on how these technologies are being used. This is why it’s crucial for organizations and businesses to invest time and resources into hardening their defenses against these technologies now.

Educate your staff about what to look out for regarding deepfake media, and use other processes and technologies to help secure your organization’s most precious resources (data) and your bottom line.

Have additional thoughts or recommendations to share for securing your organization against deepfake scammers? Share your insights in the comments section below.
