Generative AI technologies, a subset of artificial intelligence (AI), are taking the business world by storm. Over the past couple of years, we’ve seen immense growth in the usage and adoption of AI technologies. There are many positives to generative AI technologies, but they also have their downsides. (We’ve written at length about the dangers of generative AI and what industry leaders are doing to address these concerns.)

CSO reports that one type of generative AI-generated content, deepfakes, is a top security threat as we get closer to the 2024 U.S. election cycle. About this, CSO quotes Cloudflare CSO Grant Bourzikas as saying:

“While they have been around for years, today’s versions are more realistic than ever, where even trained eyes and ears may fail to identify them. Both harnessing the power of artificial intelligence and defending against it hinges on the ability to connect the conceptual to the tangible. If the security industry fails to demystify AI and its potential malicious use cases, 2024 will be a field day for threat actors targeting the election space.”

Explore 20 generative AI statistics that aim to broaden your understanding of the technologies, adoption rates, benefits, costs, and risks as we prepare to leave 2023 and head into 2024.

Let’s hash it out.

Generative AI Adoption Statistics

This article aims to cover a variety of generative AI-related statistics. We’ve broken down the content into easy-to-follow subsections, starting with a look at how readily organizations and users are adopting these technologies.

1. ChatGPT’s Domain Peaked (So Far) at 1.81 Billion Visits in May 2023

Research data from Similarweb shows that OpenAI’s ChatGPT chatbot hit the ground running on Nov. 30, 2022, drawing 15.5 million visits within the first week. Visit rates multiplied rapidly, topping 1.81 billion visits in the month of May 2023 alone! The data shows that October 2023 was a close second, with an estimated 1.7 billion visits.

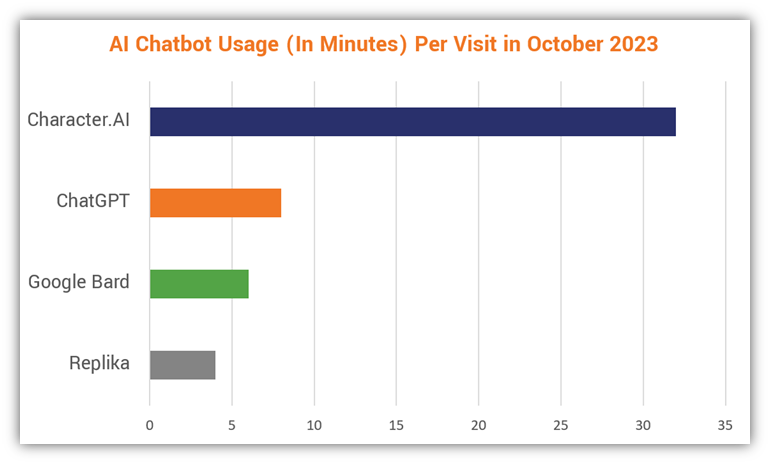

But ChatGPT isn’t the only mover and shaker in the game; there are other chatbots vying for attention. Similarweb’s data indicates that Character.AI has been getting the most attention in terms of user time spent using the chatbot:

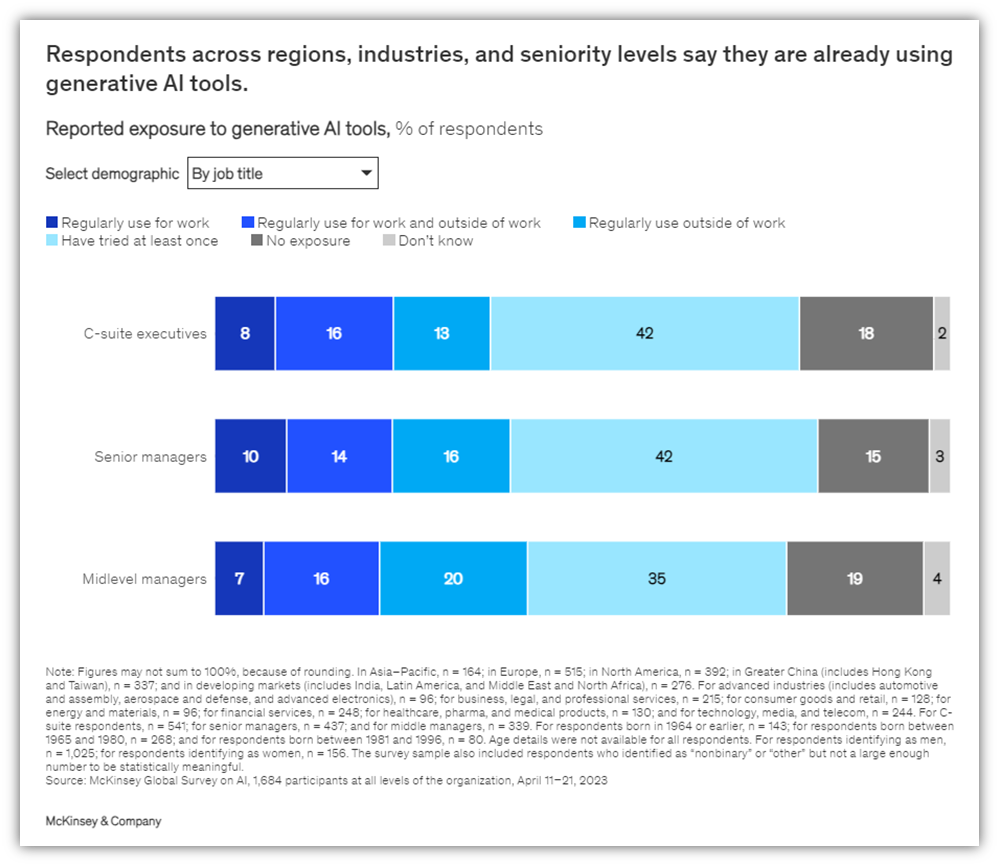

2. One-Third of Survey Respondents Use Generative AI Regularly

Research from McKinsey’s April 2023 survey shows that one in three respondents indicate that generative AI technologies are now used “regularly in at least one business function.” Among C-suite execs specifically, 37% indicate they use the tools regularly in their professional and personal lives.

3. 40% of Businesses Expect to Invest More in AI Because of Gen AI Advancements

Generative AI is also a hot topic for company leaders from a priority perspective. McKinsey’s report also shows that 28% of respondents say it’s one of the priorities on their boards’ agendas. Furthermore, two in five survey respondents indicate that their organizations will increase their general AI investments because of the increasing advances we’re seeing in generative AI with each passing day.

4. 88.3% of Companies Plan to Roll Out Gen AI-Related Policies but Most Feel Unprepared

Research from the AI Infrastructure Alliance indicates that most organizations have a forward-looking gaze toward generative AI implementation. Their report shows that nearly 9 in 10 companies have set their sights on implementing policies relating to its adoption and usage.

However, there are some sticking points that must be taken care of before any of that can happen. While 41.8% of respondents said they’re “fully staffed up and had the right budget to deliver on promises of LLMs and generative AI,” the majority (58.8%) indicate the opposite.

While it’s important for enterprises to be at the forefront of their industry, it’s crucial that industry leaders remember that AI adoption isn’t a sprint; it’s a marathon. It’s best to take your time and ensure you have all of your pieces in place (staffing, budget, policies, procedures, etc.) as best you can before you start playing the game.

5. Millennials and Gen Z Represent 65% of Generative AI Users

It likely comes as no surprise to learn that the younger generations are more readily embracing generative AI technologies. A Salesforce survey of U.S., U.K., Australia, and India users found that 7 in 10 Gen Z respondents indicate using generative AI, and more than half (52%) report trusting it to “help them make informed decisions.”

This is an interesting perspective when you consider that poor decision-making costs businesses a lot of money every year. So, if generative AI technologies can help relieve some of the decision-making-related fatigue, then it’s all gravy, right? Not necessarily. There are plenty of concerns relating to these technologies, certainly not the least of which is that they may be spitting out inaccurate information.

6. 33% of IT Leaders Think Generative AI Isn’t All It’s Cracked Up to Be

Not everyone is on the generative AI bandwagon. Salesforce also reports that one-third of IT leaders regard generative AI as being “over-hyped.” Additionally, 79% of survey respondents are concerned that the technology opens the door to increased security risks and 73% are worried about bias-related issues.

Financial Generative AI Statistics

There are plenty of numbers to look at when it comes to generative AI technologies, and we can’t cover them all. There’s not enough time (or coffee) in the world to do that, and even if we had both, the truth is that technologies and prices will keep changing as newer and more advanced technologies hit the market.

With this in mind, we gathered several monetary-focused generative AI statistics you might be interested in knowing regarding these technologies.

7. Generative AI Is Poised to Add Upwards of $4.4 Trillion to the Global Economy

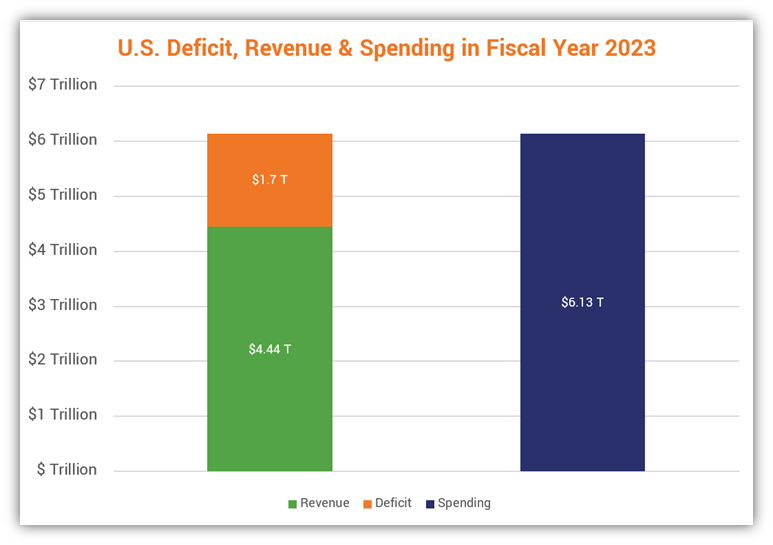

Generative AI presents an incredibly lucrative opportunity. Based on research relating to 63 use cases analyzed, McKinsey estimates that generative AI could pump between $2.6 and $4.4 trillion back into the global economy annually.

To help put this in perspective, consider that the annual revenue of the United States in fiscal year 2023 totaled $4.44 trillion. That’s all of the monies collected via various taxes (individual income, payroll, corporate income, excise, etc.).

8. 63% of Organizations Lost at Least $50 Million Due to AI/ML Governance Failures

The AI Infrastructure Alliance reports some staggering data relating to losses stemming from failed AI/ML governance. According to their survey of 1,000+ companies with $1+ billion in revenues, nearly two in three organizations with existing AI deployments lost $50 million or more when they failed to manage those systems correctly. The reported losses break down as follows:

- 18% report losing between $5 million and $10 million;

- 19% indicate losing between $10 million and $50 million;

- 29% shared losing between $50 million and $100 million;

- 24% report losing between $100 million and $200 million; and

- 10% indicate they lost more than $200 million.
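As a quick sanity check on the headline figure, the short Python sketch below adds up the loss buckets listed above. Only the percentages come from the survey data quoted here; the variable names and the way each bucket is keyed by its lower bound are illustrative choices.

```python
# Share of respondents in each loss bucket, as listed above (in percent).
# Each entry is (lower bound of the bucket in $ millions, share of respondents).
# This layout is an illustrative assumption, not the survey's own format.
loss_buckets = [
    (5, 18),    # $5 million to $10 million
    (10, 19),   # $10 million to $50 million
    (50, 29),   # $50 million to $100 million
    (100, 24),  # $100 million to $200 million
    (200, 10),  # more than $200 million
]

# The buckets should account for every respondent that reported a loss.
total_share = sum(share for _, share in loss_buckets)

# Add up only the buckets that start at $50 million or higher.
share_at_least_50m = sum(share for floor, share in loss_buckets if floor >= 50)

print(f"All buckets combined: {total_share}%")
print(f"Lost at least $50 million: {share_at_least_50m}%")
```

The three largest buckets (29%, 24%, and 10%) are what produce the 63% in the heading.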

9. A Single GPU Chip Can Cost $10,000; Some May Cost $40,000+

CNBC reports the industry’s most popular graphics processing unit (GPU) chip, the NVIDIA A100, can easily cost $10,000 for a single chip. Another article shows that its successor, NVIDIA’s H100, can cost more than four times that amount.

Now, consider that companies don’t generally buy a single chip. Rather, they purchase multi-chip systems like NVIDIA’s DGX A100 or the DGX H100, the latter of which costs upwards of $470,000 (depending on where you buy it). Each DGX H100 houses 8 GPUs, and up to 32 DGX H100 systems can be connected to run up to 256 GPUs simultaneously.
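To see how quickly those numbers scale, here is a rough Python sketch of the arithmetic. The GPU and system counts come from the paragraph above; the per-chip price is an assumption based on the $40,000 figure in the heading, and the result covers the GPUs alone, not the rest of the system hardware.

```python
GPUS_PER_DGX_H100 = 8        # GPUs in one DGX H100, per the text above
MAX_CONNECTED_SYSTEMS = 32   # DGX H100 systems that can be linked, per the text above

# Assumption: a rough per-chip H100 price in USD, taken from the heading.
ASSUMED_PRICE_PER_H100_USD = 40_000

# Total GPUs available when the maximum number of systems is connected.
total_gpus = GPUS_PER_DGX_H100 * MAX_CONNECTED_SYSTEMS

# Cost of the GPU chips alone at the assumed price (excludes everything else).
gpu_only_cost_usd = total_gpus * ASSUMED_PRICE_PER_H100_USD

print(f"GPUs in a fully connected cluster: {total_gpus}")
print(f"GPU-only cost at the assumed price: ${gpu_only_cost_usd:,}")
```

Actual pricing varies by vendor and configuration, so treat the dollar figure as an order-of-magnitude illustration only.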

10. $700,000: The Estimated Daily Cost of Operating ChatGPT

So, how much does it cost a major generative AI provider to operate their technologies? According to the semiconductor and research analysis firm SemiAnalysis, “ChatGPT currently costs ~$700,000 a day to operate in hardware inference costs.”

Now, keeping in mind that generative AI tools like ChatGPT require training in addition to inference (i.e., the process of applying rules to live data to test how effectively the generative AI system provides actionable data), there are other costs as well, including large language model (LLM) training costs, staffing, training for those employees, and a litany of additional costs that quickly add up.
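To put the daily inference estimate alone in annual terms, the sketch below simply multiplies it out. It assumes the daily cost stays flat for a full year, which is a simplification, and it leaves out training, staffing, and the other costs mentioned above.

```python
DAILY_INFERENCE_COST_USD = 700_000  # SemiAnalysis estimate quoted above
DAYS_PER_YEAR = 365                 # assumption: the daily cost stays constant all year

# Annualized hardware inference cost under the flat-cost assumption.
annual_inference_cost_usd = DAILY_INFERENCE_COST_USD * DAYS_PER_YEAR

print(f"Estimated annual inference cost: ${annual_inference_cost_usd:,}")
```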

Generative AI Deepfake Statistics

Whether we like it or not, deepfake content is here to stay. Deepfakes are images, audio, and video recordings that are generated using AI technology.

11. Deepfake-Based Identity Fraud Doubled From 2022 to Q1 2023

Research from Sumsub shows that the share of identity fraud cases involving deepfakes jumped from 0.2% in 2022 to 2.6% in Q1 2023 in the U.S., and from 0.1% to 4.6% in Canada. While these sound like small amounts, keep in mind that they will likely increase as the technologies become more widely available and adopted.

As described by Sumsub’s Head of AI and ML, Pavel Goldman-Kalaydin, in the article:

“Deepfakes have become easier to make and, consequently, their quantity has multiplied, as is also evident from the statistics. To create a deep fake, a fraudster uses a real person’s document, takes a photo from it, and turns it into a 3D persona. Anti-fraud and verification providers who do not constantly work to update deepfake detection technologies are lagging behind, putting both businesses and users at risk. Upgrading deepfake detection technology is an essential part of modern effective verification and anti-fraud systems.”

In September 2023, three federal agencies (NSA, FBI, and CISA) jointly released a Cybersecurity Information Sheet (CSI) titled “Contextualizing Deepfake Threats to Organizations.” This document serves as a resource to help make organizations aware of synthetic digital media-related trends, threats, and attack techniques. In addition to recommending the use of passive AI-detection technologies and training employees on what to look out for, the agencies also recommend:

- Using digital provenance technologies to prove the media’s authenticity. We’re already seeing the use of universal standards like C2PA coming into effect as leading manufacturers increasingly embrace it in their products.

- Securing essential and sensitive communications. Public key cryptography (e.g., encryption for public channels) through the use of SSL/TLS certificates and email encryption certificates is the answer.

- Using real-time digital identity verification. This could include using public key infrastructure (PKI)-based digital identity solutions, such as client authentication certificates.

Together, public key infrastructure and a standard like C2PA help make it possible for businesses to deliver trusted content via secure, trusted channels.

12. 96% of Deepfake Videos Are Non-Consensual Pornography

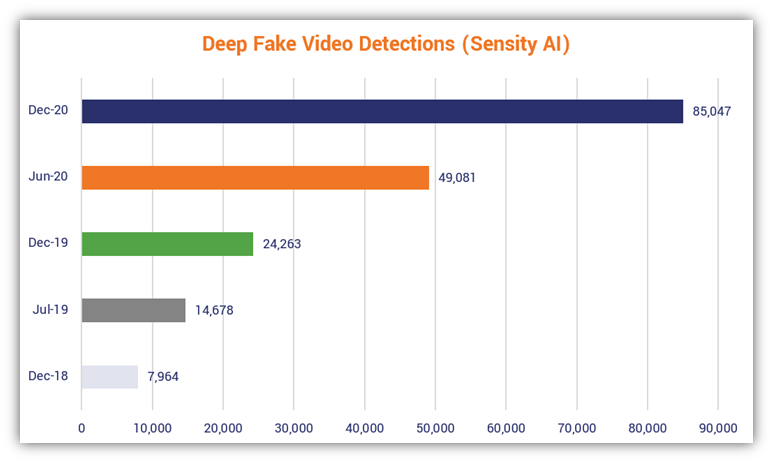

Deeptrace AI (now Sensity AI) found in a 2019 study that of the 14,678 deepfake videos discovered online, the overwhelming majority (96%) were categorized as non-consensual porn. Additional research by Sensity AI shows that as of December 2020, the number of deepfakes had more than quintupled, surpassing 85,000. Essentially, the number of deepfake videos online has virtually doubled every six months.

13. 71% of Users Are Unaware of Deepfake Media

iProov, a biometric tech provider, says that seven in 10 global consumers indicate having no knowledge of deepfakes. Less than one-third of the company’s 2022 survey respondents say they are “aware” of deepfakes.

Of course, the question that comes to mind is whether those respondents are confident they can recognize a deepfake if they see one. Mexican respondents sure seem to believe so! 82% of Mexican respondents indicated they would be able to recognize deepfakes for what they are. Germans, on the other hand, were the least confident, with only 43% saying they would be able to tell the difference.

Respondents in the U.S. and U.K. fell somewhere in between, with a little more than 40% of U.S. respondents and ~45% of U.K. respondents saying they likely couldn’t tell the difference.

Whether those confident respondents could actually spot a deepfake is another matter. Data from researchers at the Center for Humans and Machines, Max Planck Institute for Human Development in Germany and the University of Amsterdam indicates otherwise.

14. When Put to the Test, One-Quarter of Survey Respondents Can’t Identify Deepfake Audio

Did you know that one in four people can’t ID a deepfake audio sample? Research from a study of 529 individuals (published on PLOS One) shows that one-quarter of survey respondents couldn’t differentiate examples of deepfake audio from real audio recordings.

Now, let’s go out on a limb and imagine this survey group represents the population of the U.S. That would mean that roughly 25% of the people in the U.S. are susceptible to falling for deepfake recordings. That’s approximately 83,952,412 people when you consider that the U.S. Census’s population clock estimate was 335,809,648 people as of Dec. 4, 2023!
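For anyone who wants to check the math, the back-of-the-envelope estimate above can be reproduced in a few lines of Python. The population figure and the one-in-four rate come from the text; treating the study group as representative of the whole U.S. population is the same loose assumption made above.

```python
US_POPULATION_DEC_2023 = 335_809_648  # U.S. Census population clock estimate cited above
UNDETECTED_RATE = 0.25                # one in four participants missed the deepfake audio

# Assumption: the 529-person study group stands in for the entire U.S. population.
susceptible_people = round(US_POPULATION_DEC_2023 * UNDETECTED_RATE)

print(f"Estimated people susceptible to deepfake audio: {susceptible_people:,}")
```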

15. 30% of Indians Say At Least One in Four Videos They Watch Online Are Fake

A LocalCircles survey of more than 32,000 Indians from 319 districts shows that there’s increasing recognition of deepfake media among the country’s population. Of those respondents, 10,838 indicated that they discovered after the fact that 25% or more of the videos they watched on their smartphones, tablets, and laptops were fake.

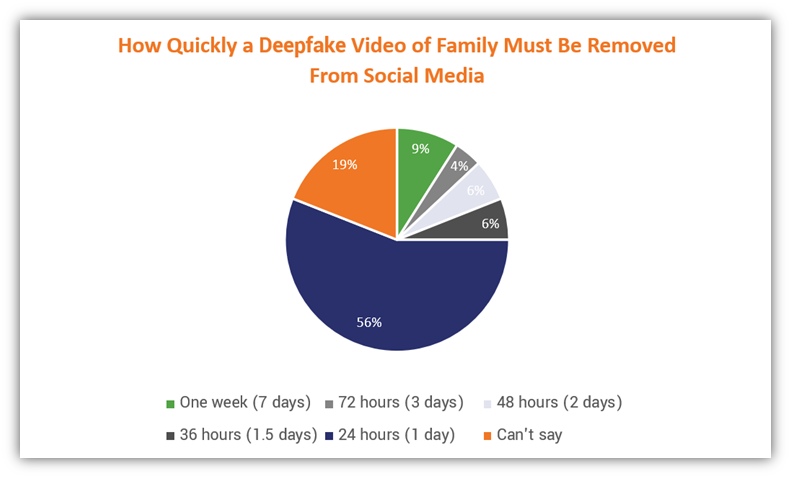

Furthermore, more than half (56%) of the survey’s respondents said that social media platforms should be required to remove deepfake videos of family members within 24 hours of when a removal request and details about why it should be removed have been submitted.

Although generative AI represents an incredible technological evolution that companies can capitalize on and use to their advantage, it’s not all sunshine and rainbows. There’s another side to generative AI relating to your organization that you may want to be aware of…

16. 51% of Security Professionals May Leave Their Jobs Due to Generative AI-Related Stress

A survey of 650+ senior security operations professionals in the U.S., conducted by Sapio Research on behalf of Deep Instinct, shows that 55% of respondents have increased stress due to generative AI. The top cause of that stress? Limited staffing and resources relating to adopting new technologies, including generative AI.

What makes matters worse is that more than half indicate they’re ready to throw in the towel over the next year due to the stress.

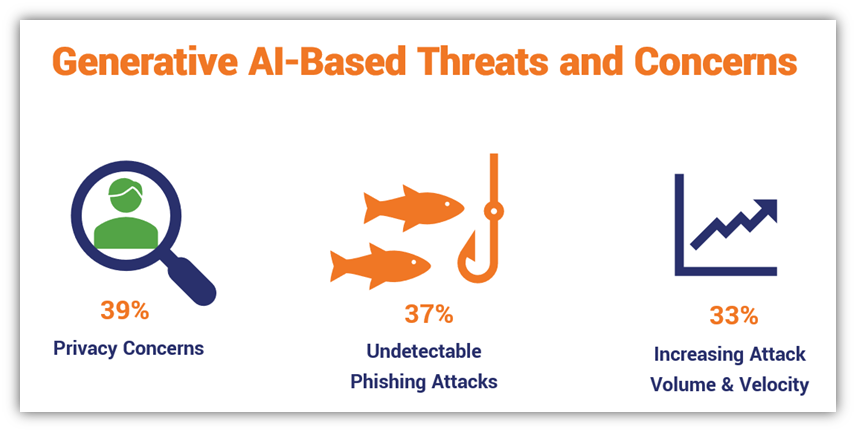

17. 46% of Respondents Indicate Generative AI Increases Their Organizations’ Vulnerabilities

Although the majority (69%) of the Sapio Research/Deep Instinct survey respondents say they’ve adopted generative AI tools within their organizations, nearly half of the survey’s respondents indicate that generative AI also represents “a disruptive cybersecurity threat” that may leave them more vulnerable to attacks.

What were the three leading areas of concern?

18. 85% of Security Professionals Attribute Cyber Attack Increases to Generative AI

More than four in five (85%) security professionals surveyed as part of the Sapio Research/Deep Instinct study indicate that they believe attacks have increased as a result of threat actors using generative AI tools and technologies. Considering that 75% of the survey’s respondents say that attacks have increased over the past 12 months, it doesn’t bode well for businesses.

19. 32% of Organizations Report Banning Generative AI Technologies

Not everyone is happy with their employees using generative AI technologies. Nearly one-third of ExtraHop’s survey respondents indicate they’re so concerned about vulnerabilities and security risks relating to the tools that they’ve banned them outright.

This may leave you wondering: In which industries are organizations using generative AI tools the most? Technology leads the way with 85% of survey respondents indicating employees “frequently or sometimes use” gen AI tools or LLMs, followed by healthcare (73%), transportation (72%), manufacturing (70%), and retail (69%).

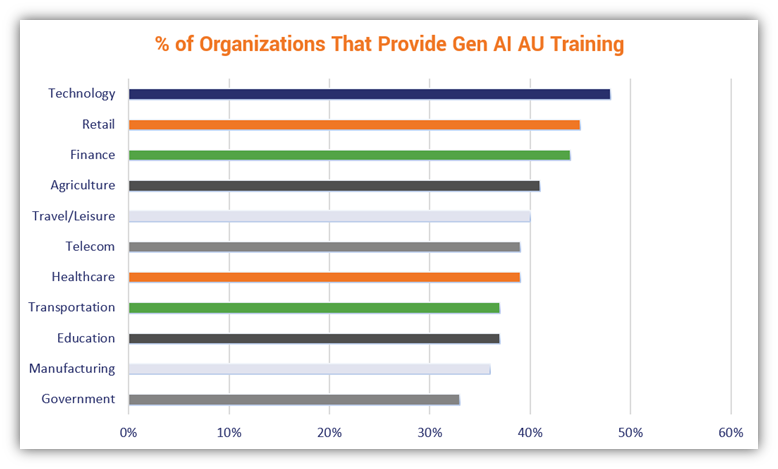

20. Less Than Half of Organizations Across All Industries Provide Gen AI Acceptable Use Training

The ExtraHop survey provided some other useful nuggets of information. One of these insights provides perspective on how organizations are — or are not — trying to prepare their employees for what they’ll face in terms of deepfake media.

The percentage of organizations offering generative AI acceptable use (AU) training varies quite a bit by industry, ranging from 33% of government organizations to nearly half (48%) of technology businesses.

Sadly, in no industry did a majority of organizations provide that type of training.

Do these generative AI statistics fall in line with what you were expecting to read? Be sure to share your insights and thoughts in the comments section below!
